Over the last few posts we’ve explored how AI tools are reshaping the development process. First, we talked about working with AI as a form of modern pair programming—two minds, one keyboard. Then we looked at how frameworks like the BMad Method introduce structure into AI-assisted development. But there’s another important question emerging for engineering leaders: how do we continue to develop engineering talent in a world where tools like Claude and GitHub Copilot can write large amounts of code?

The concern often sounds something like this: If AI can generate code, how will junior engineers learn? Will they skip the fundamentals? Will there still be meaningful work for early-career developers? It’s a reasonable concern, but it may also be based on a misunderstanding. The reality is that the core process for developing engineering talent hasn’t changed nearly as much as people think.

The Problem: Fear That AI Will Replace the Learning Curve

Historically, the way engineers learned on the job was simple and very practical.

A new graduate or junior developer would join the team and receive some basic onboarding:

- How the codebase is structured

- How deployments work

- The team’s coding standards

- The tools and frameworks being used

Then the real learning began.

Managers or senior engineers would assign progressively larger tasks:

- Add a field to a form

- Build a small feature

- Extend a module

- Own a subsystem

Through this process, junior engineers learned by doing. They wrote code, made mistakes, received feedback, and gradually built confidence.

When people look at AI today, they worry that this progression will vanish. If an AI can generate the code instantly, does the learning opportunity go with it?

The answer is no. The learning model is still fundamentally the same.

The Solution: AI as a Learning Amplifier

The key shift is that the junior engineer now works with an AI assistant during the process.

Instead of writing every line of code manually, they collaborate with tools like Claude or Copilot to generate the initial implementation.

But the responsibility for understanding and validating the code remains with the engineer.

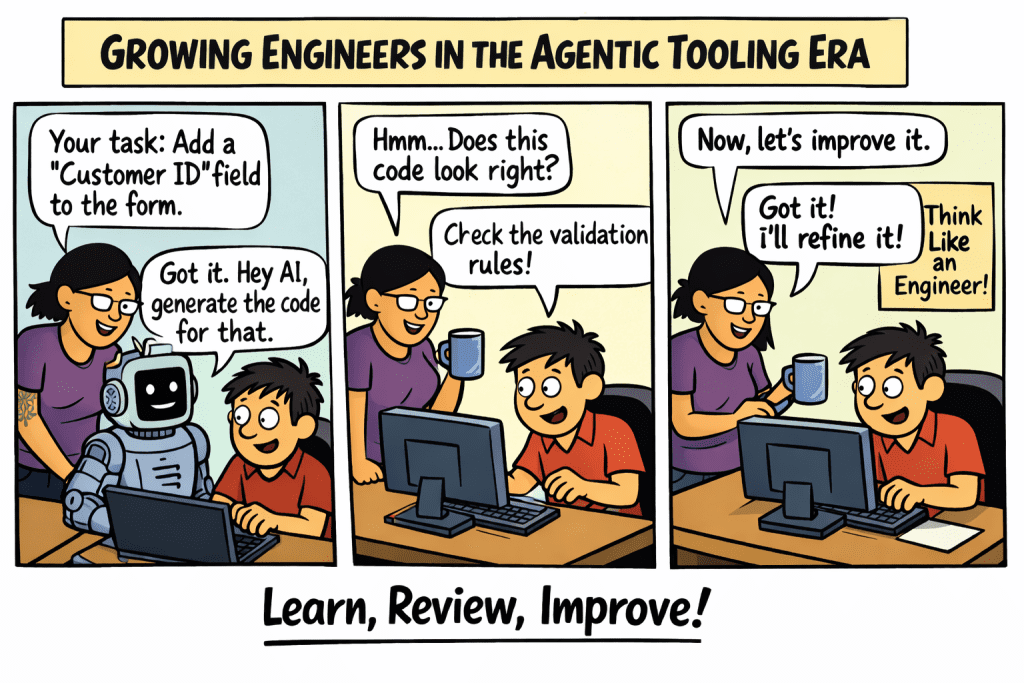

The workflow might look like this:

Step 1: Assign the same small task

The manager still assigns the same type of work:

“Add a new field to this form and store it in the database.”

Step 2: Use AI to generate a starting point

The engineer asks the AI:

“Generate the code needed to add a ‘Customer ID’ field to this React form and save it through the API.”
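To make this step concrete, here is the kind of first draft an assistant might return for the persistence half of that request. It is a minimal, hypothetical sketch. The `CustomerFormValues` and `CustomerPayload` types, the `buildCustomerPayload` function, and the snake_case `customer_id` field name are illustrative assumptions rather than anything from a real codebase. The React component itself is left out so the example stays self-contained.

```typescript
// Hypothetical shape of the form state after the new field is added.
interface CustomerFormValues {
  name: string;
  email: string;
  customerId: string; // the newly added "Customer ID" field
}

// Hypothetical request body the API expects (snake_case is an assumption).
interface CustomerPayload {
  name: string;
  email: string;
  customer_id: string;
}

// A plausible AI-generated first draft: it maps form values onto the
// API payload and passes the new field through unchanged.
function buildCustomerPayload(values: CustomerFormValues): CustomerPayload {
  return {
    name: values.name,
    email: values.email,
    customer_id: values.customerId,
  };
}

const draft = buildCustomerPayload({
  name: "Ada Lovelace",
  email: "ada@example.com",
  customerId: "  C-1024 ",
});

// JSON.stringify makes the surrounding whitespace visible in the output.
console.log(JSON.stringify(draft.customer_id)); // prints "  C-1024 "
```

A draft like this compiles and reads plausibly, which is exactly why the review step matters. It forwards the raw input unchanged, so stray whitespace or an empty value would reach the database.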

Step 3: Review and validate

The critical learning happens here.

The engineer must confirm:

- Does the code follow our coding standards?

- Does it integrate correctly with the existing module?

- Are the validation rules correct?

- Does it handle edge cases, such as empty or malformed input?
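The validation and edge-case questions are easier to answer when the rules are written down as a small, testable function. The sketch below assumes a hypothetical team rule: a Customer ID is optional, but when present it must be 4 to 12 letters, digits, or hyphens after surrounding whitespace is trimmed. `validateCustomerId`, `ValidationResult`, and the pattern are illustrative names and rules, not part of any framework.

```typescript
// Result type: either a cleaned value (null means "not provided") or an error.
type ValidationResult =
  | { ok: true; value: string | null }
  | { ok: false; error: string };

// Hypothetical team rule: 4-12 characters, letters, digits, or hyphens only.
const CUSTOMER_ID_PATTERN = /^[A-Za-z0-9-]{4,12}$/;

function validateCustomerId(raw: string | null | undefined): ValidationResult {
  // Edge case: a missing value is allowed and stored as null.
  if (raw === null || raw === undefined) {
    return { ok: true, value: null };
  }
  const trimmed = raw.trim();
  // Edge case: empty or whitespace-only input also counts as "not provided".
  if (trimmed.length === 0) {
    return { ok: true, value: null };
  }
  // Reject anything outside the assumed format (too short, too long, spaces, etc.).
  if (!CUSTOMER_ID_PATTERN.test(trimmed)) {
    return {
      ok: false,
      error: "Customer ID must be 4-12 letters, digits, or hyphens.",
    };
  }
  return { ok: true, value: trimmed };
}

// Running a generated draft against cases like these is where the learning happens.
const cases: Array<string | null> = ["  C-1024 ", "", "   ", "ab", "C 1024", null];
for (const input of cases) {
  console.log(JSON.stringify(input), "->", JSON.stringify(validateCustomerId(input)));
}
```

Keeping the rule as a pure function makes it independent of the UI framework, so it can be unit-tested directly and reused wherever the form is submitted. Whether blank input should become `null` or be rejected outright is a team decision; the sketch simply picks one.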

Step 4: Improve and refine

If the generated code doesn’t align with the team’s approach, the engineer refactors it.

This mirrors the traditional learning loop: Attempt → Review → Improve

The difference is that the first attempt may be generated by AI, but the learning still comes from understanding and improving the result.

Evidence: Why This Model Still Works

In practice, many engineering teams experimenting with AI-assisted development report a consistent pattern.

Junior engineers often become productive faster.

Instead of spending hours stuck on syntax or documentation, they can:

- Explore solutions quickly

- Understand patterns faster

- Iterate on ideas in real time

This allows them to spend more time on higher-value learning activities:

- Understanding architecture

- Improving code quality

- Thinking about system design

In other words, AI removes some of the friction around writing code, but it doesn’t remove the need to understand the code.

The same progression still happens:

| Traditional Growth | AI-Assisted Growth |
| --- | --- |
| Add a field | Generate and validate a field |
| Build a form | Generate and refine a form |
| Build a module | Design and orchestrate a module |

The progression stays the same; the tools simply accelerate the first draft.

What Changes for Engineering Leaders

The biggest shift may not be for developers at all—it may be for engineering managers.

If junior engineers can move faster with AI assistance, it becomes possible for a single manager or senior engineer to support more developers than before.

Instead of reviewing every line of code, leaders focus on:

- Architectural guidance

- Code quality standards

- System design decisions

- Mentoring engineers on judgment and tradeoffs

In this environment, the role of leadership evolves from code oversight to engineering coaching.

The goal becomes teaching engineers how to:

- Prompt effectively

- Evaluate generated code

- Align outputs with engineering standards

- Think critically about design decisions

The Future: Same Journey, Better Tools

Despite all the excitement around AI, the core journey of becoming a great engineer hasn’t changed.

Developers still learn by:

- Solving real problems

- Iterating on solutions

- Receiving feedback

- Gradually taking on more responsibility

The difference is that the tools available today can dramatically accelerate the process.

For organizations building teams in the agentic tooling era, the opportunity is clear:

- Continue hiring and developing junior engineers

- Integrate AI into the learning process

- Focus mentorship on judgment rather than syntax

Because even in a world of AI-generated code, great software still depends on great engineers.

And the best way to build great engineers is still the same as it’s always been:

Give them real problems to solve, support them as they learn, and let them grow.